-

Type:

Problem report

-

Resolution: Fixed

-

Priority:

Trivial

-

Affects Version/s: 4.0.13

-

Component/s: Agent (G)

-

Sprint 58 (Nov 2019), Sprint 59 (Dec 2019), Sprint 60 (Jan 2020), Sprint 61 (Feb 2020), Sprint 62 (Mar 2020)

-

1

Random repeated log reads detected by Zabbix agent on Windows platforms having a log from a Java based custom application. Makes log items unreliable.

Environment:

Windows 10 tablets.

NTFS file system

Zabbix Agent 4.0.3

Log written by a Java application.

Items:

log.count[C:\Logs\Console.log,recieved INSERT,,,skip] 1m 30d 365d Zabbix agent (active) log.count[C:\Logs\Console.log,recieved REMOVE,,,skip] 1m 30d 365d Zabbix agent (active) log[C:\Logs\Console.log,detected,,20,skip] 30s 30d Zabbix agent (active) vfs.file.size[C:\Logs\Console.log] 0 30d 365d Zabbix agent vfs.file.time[C:\Logs\Console.log,change] 0 30d 365d Zabbix agent vfs.file.time[C:\Logs\Console.log,modify] 0 30d 365d Zabbix agent vfs.file.time[C:\Logs\Console.log,access] 0 30d 365d Zabbix agent

Some documentation:

https://www.zabbix.com/documentation/4.0/manual/config/items/itemtypes/log_items#important_notes

The server and agent keep the trace of a monitored log's size and last modification time (for logrt) in two counters. Additionally:

The agent also internally uses inode numbers (on UNIX/GNU/Linux), file indexes (on Microsoft Windows) and MD5 sums of the first 512 log file bytes for improving decisions when logfiles get truncated and rotated.

On UNIX/GNU/Linux systems it is assumed that the file systems where log files are stored report inode numbers, which can be used to track files.

On Microsoft Windows Zabbix agent determines the file system type the log files reside on and uses:

On NTFS file systems 64-bit file indexes.

File size and modification times captured by the same agent:

Timestamp Size Modify Access 2019-09-30 03:19:30 56518 1569802590 1569802590 2019-09-30 03:19:00 56518 1569802590 1569802590 2019-09-30 03:18:31 56518 1569802590 1569802590 2019-09-30 03:18:00 56518 1569802590 1569802590 2019-09-30 03:17:31 56518 1569802590 1569802590 2019-09-30 03:17:01 56518 1569802590 1569802590 2019-09-30 03:16:31 56398 1569802514 1569798989 2019-09-30 03:16:01 56398 1569802514 1569798989

Agent log:

4404:20190930:031841.962 after changing modification time the size of log file "C:\Logs\Console.log" still has not been updated, consider it to be a new file 4404:20190930:031736.810 the modification time of log file "C:\Logs\Console.log" has been updated without changing its size, try checking again later

Steps to reproduce:

Not known. (yet)

Expected:

As the log is not rotated and the timestamps not modified as far as the monitoring is detecting, no repeated log read should happen.

Workaround:

Although the log file in question is not a candidate for logrt item (file name remains the same after rotation, timestamp is appended to the rotated file afterwards), here are the item keys that completely eliminates the repeated reads in the given example:

logrt["C:\Logs\Console\.log$",detected,,20,skip,,,copytruncate] logrt.count[C:\Logs\Console.log,recieved INSERT,,,skip,,copytruncate]

Apparently copytruncate option adds additional layer of verification if the log file is new or not, resulting in much better handling. Expected outcome of this task would be the same level of detection in normal log items too. And additionally an explanation (if known) what could possibly be improved in the Java applications to eliminate this behavior.

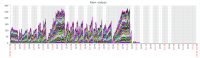

See the attached screenshot which illustrates the number of hourly repeated log reads across 88 devices before with log items and after logrt - copytruncate workaround was introduced.