-

Problem report

-

Resolution: Cannot Reproduce

-

Trivial

-

Frontend OS: Red Hat 8.6

DB OS: Red Hat 8.6

PostgreSQL 14 (8 CPUs, 32 GB RAM, data: 146 GB)

TimescaleDB: 2.7.2

Zabbix Server: 6.0.11

Zabbix Proxy: 6.0.12

Tested frontend versions: 6.0.9, 6.0.10, 6.0.11, 6.0.12

I had a Zabbix environment on version 6.0.9 (hosts: 6k, items: 340k, triggers: 148k, vps: 1900). After upgrading to version 6.0.12, CPU usage on the PostgreSQL server rose to very high values. After investigation, I found that a lot of locks were being created on the database that could not be processed (I don't know why, but LLD worker load also rises after the frontend upgrade). After downgrading only the frontend to 6.0.9, everything returns to normal.

I tried every newer version, but only with frontend 6.0.9 does everything return to normal (checked several times).

Steps to reproduce:

- Install Zabbix 6.0.9 with PostgreSQL 14 + TimescaleDB 2.7.2

- Upgrade to a version later than 6.0.9

- Watch the CPU load on the database server

- Downgrade only the frontend to 6.0.9

- Watch the CPU load on the database server

Result:

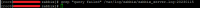

See screenshots.

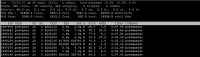

LLD worker load rises.

CPU load on the PostgreSQL server rises to very high values.

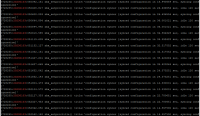

Many slow queries appear in the Zabbix server log file.

Many locks are created on the database.

Configuration syncer sync time is often very long (sometimes more than 20 seconds).
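To make the slow queries easier to compare between frontend versions, they could be pulled out of the server log with a small script. This is only a rough sketch: it assumes single-line entries of the form `<pid>:<YYYYMMDD>:<HHMMSS.mmm> slow query: <seconds> sec, "<sql>"`, and the function names `parse_slow_queries` and `slowest` are made up for this illustration, not part of Zabbix.

```python
import re
from typing import Iterable, List, Tuple

# Assumed log line shape (not taken from the attached logs):
#   <pid>:<YYYYMMDD>:<HHMMSS.mmm> slow query: <seconds> sec, "<sql>"
SLOW_QUERY_RE = re.compile(
    r'^\s*(?P<pid>\d+):(?P<date>\d{8}):(?P<time>\d{6}\.\d{3})\s+'
    r'slow query:\s+(?P<seconds>\d+(?:\.\d+)?)\s+sec,\s+"(?P<sql>.*)"\s*$'
)


def parse_slow_queries(lines: Iterable[str]) -> List[Tuple[float, str]]:
    """Return (duration_seconds, sql) for every line that matches the assumed shape."""
    found = []
    for line in lines:
        match = SLOW_QUERY_RE.match(line)
        if match:
            found.append((float(match.group("seconds")), match.group("sql")))
    return found


def slowest(lines: Iterable[str], limit: int = 10) -> List[Tuple[float, str]]:
    """Return the `limit` longest-running queries, slowest first."""
    ranked = sorted(parse_slow_queries(lines), key=lambda pair: pair[0], reverse=True)
    return ranked[:limit]
```

Running `slowest(open(path))` on the server log before and after the frontend downgrade (where `path` is wherever the log file lives on that system) should show whether the same statements dominate in both cases. Queries that span several log lines are not handled by this sketch.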

Expected:

The database should work normally, without the additional locks (as in version 6.0.9).
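One way to put numbers on the lock difference between frontend versions might be to snapshot `pg_locks` under each version and count entries by mode. The sketch below is an assumption about how that could be done, not something taken from this environment: `LOCK_QUERY` uses the standard `pg_locks` and `pg_stat_activity` views, while `summarize_locks` is a hypothetical helper that expects the rows as `(mode, granted)` tuples from whatever client ran the query.

```python
from collections import Counter
from typing import Iterable, Tuple

# Query against standard PostgreSQL catalog views; run it with any client
# (psql, a Python driver, ...) while connected to the Zabbix database.
LOCK_QUERY = """
SELECT l.mode, l.granted
FROM pg_locks AS l
JOIN pg_stat_activity AS a ON a.pid = l.pid
WHERE a.datname = current_database()
"""


def summarize_locks(rows: Iterable[Tuple[str, bool]]) -> Counter:
    """Count locks per (mode, state), where state is 'granted' or 'waiting'."""
    return Counter(
        (mode, "granted" if granted else "waiting") for mode, granted in rows
    )
```

Comparing the counters taken under frontend 6.0.9 and under a later version, especially the `waiting` entries, would show which lock modes account for the increase.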