-

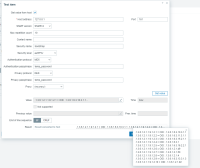

Type: Change Request

Resolution: Fixed

Priority: Major

Affects Version/s: 3.4.7

Component/s: Proxy (P), Server (S)

Sprint: Sprint 92 (Sep 2022), Sprint 93 (Oct 2022), Sprint 94 (Nov 2022), Sprint 95 (Dec 2022), Sprint 96 (Jan 2023)

4

I would like to ask for improvements to the bulk processing of SNMP packets. I know how it works internally (https://www.zabbix.com/documentation/3.4/manual/config/items/itemtypes/snmp#internal_workings_of_bulk_processing), but it does not work well in my environment.

I'm trying to run LLD against a large Cisco ASR9k router that contains thousands of interfaces.

From the command line:

$ snmpbulkwalk -mall -v2c -c community router.net ifName

I'm able to get all 2k+ interfaces within a few seconds. By default, Net-SNMP uses a max-repetitions value of 10. Experimenting with the -Cr<NUM> switch gives better results as <NUM> grows; for example, with 100 it is still possible to get the response in a single packet.
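To see why a larger -Cr value helps so much, here is a rough model of how many GETBULK round trips are needed to walk a single column such as ifName. It assumes every response carries exactly max-repetitions varbinds (no tooBig errors, no packet-size limits) and uses N = 2000 as a stand-in for "2k+ interfaces"; `bulk_requests` is a hypothetical helper, not part of Net-SNMP or Zabbix.

```python
def bulk_requests(rows: int, max_repetitions: int) -> int:
    """Estimate GETBULK round trips for walking one column of `rows` entries.

    Simplifying assumptions (illustrative only): every response carries
    exactly `max_repetitions` varbinds, and no tooBig errors or packet-size
    limits occur.
    """
    if rows < 0 or max_repetitions < 1:
        raise ValueError("rows must be >= 0 and max_repetitions >= 1")
    # Integer ceiling division: requests needed to return every row.
    requests = (rows + max_repetitions - 1) // max_repetitions
    # If rows divides evenly, the last full response still lies inside the
    # subtree, so one more request is needed to detect the end of the walk.
    if rows % max_repetitions == 0:
        requests += 1
    return requests


# N = 2000 is an assumed stand-in for "2k+ interfaces".
for r in (10, 15, 100):
    print(f"-Cr{r}: about {bulk_requests(2000, r)} GETBULK requests")
```

Under these assumptions, raising max-repetitions from 10 to 100 cuts the walk from about 201 to about 21 round trips, which is consistent with the improvement seen with larger -Cr values.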

However, Zabbix uses its own logic. The function zbx_snmp_walk (zabbix_server/poller/checks_snmp.c) starts slowly with a low max_vars and increases max_vars after a number of successfully retrieved responses. According to tcpdump, in my case it reached a maximum of 15; then the SNMP daemon started responding slowly (maybe because of the previous small requests), Zabbix decreased max_vars, which made things even worse, and the whole LLD failed.
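The feedback loop described above can be illustrated with a deliberately simplified, hypothetical model of adaptive batch sizing. The rules used here (grow by one after each success up to a cap, halve after each failure) and all default values are assumptions chosen for illustration; they are not the actual logic of zbx_snmp_walk(), which is described in the bulk processing documentation linked above. The optional `static` argument models the requested "disable dynamic change" behaviour.

```python
from typing import Iterable, List, Optional


def max_vars_trace(outcomes: Iterable[bool], start: int = 1, cap: int = 128,
                   static: Optional[int] = None) -> List[int]:
    """Return the max_vars value used for each request (hypothetical model).

    outcomes: True for a successful response, False for a timeout/failure.
    When `static` is None, max_vars grows by 1 after a success (up to the
    illustrative `cap`) and is halved, never below 1, after a failure.
    When `static` is set, that value is used for every request.
    """
    trace: List[int] = []
    current = static if static is not None else start
    for ok in outcomes:
        trace.append(current)
        if static is not None:
            continue  # fixed value: never adapt
        current = min(cap, current + 1) if ok else max(1, current // 2)
    return trace


trace = max_vars_trace([True] * 14 + [False] * 3)
print(trace)
print(max_vars_trace([True, False, True], static=100))
```

With 14 successes followed by 3 timeouts, this model peaks at 15 and then drops to 7 and 3, so the remaining rows are fetched with ever smaller requests; with a static value, the batch size stays constant regardless of failures.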

The global timeout in the poller configuration is already at its maximum of 30 s, which is also not ideal.

Disabling bulk processing for this device is a no-go, because an snmpwalk over the ifName tree takes ~2m30s (thousands of interfaces).
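A quick consistency check on these timings, assuming N ≈ 2000 rows (the report only says "thousands", so the real per-request time may be lower), one GETNEXT round trip per row for a plain snmpwalk, and ignoring the larger size of GETBULK responses:

$$
t_{\text{GETNEXT}} \approx \frac{150\ \text{s}}{2000} = 75\ \text{ms},\qquad
t_{\text{walk}}(R{=}100) \approx \left(\left\lceil \tfrac{2000}{100} \right\rceil + 1\right) \times 75\ \text{ms} = 21 \times 75\ \text{ms} \approx 1.6\ \text{s}
$$

Here $R$ is max-repetitions and the extra request detects the end of the subtree. The estimate is in line with the "few seconds" measured with snmpbulkwalk.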

So what I would like is some way to fine-tune SNMP bulk processing.

Suggestions:

- the ability to disable dynamic changes of max-repetitions

- the possibility to define a static max-repetitions value (to fine-tune responses)

- implement it in the same user interface where bulk requests can already be disabled

- a global configuration parameter for zabbix_server
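A sketch of how the suggested settings could be combined, under an assumed precedence: bulk disabled on the interface wins, then a per-interface static value, then a global server parameter, and only otherwise the current adaptive behaviour. All field and function names are hypothetical and do not correspond to Zabbix 3.4 options, apart from the existing ability to disable bulk requests per interface.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass
class BulkSettings:
    """Hypothetical settings mirroring the suggestions (names are illustrative)."""
    bulk_enabled: bool = True                        # existing per-interface bulk toggle
    interface_max_repetitions: Optional[int] = None  # suggested per-interface static value
    global_max_repetitions: Optional[int] = None     # suggested zabbix_server parameter


def effective_policy(s: BulkSettings) -> Tuple[str, Optional[int]]:
    """Resolve which walk strategy to use under the assumed precedence."""
    if not s.bulk_enabled:
        return ("getnext", 1)  # bulk disabled: one row per request
    for value in (s.interface_max_repetitions, s.global_max_repetitions):
        if value is not None:
            if value < 1:
                raise ValueError("max-repetitions must be >= 1")
            return ("static", value)  # fixed value, no dynamic adjustment
    return ("adaptive", None)  # fall back to the current dynamic max_vars logic


print(effective_policy(BulkSettings(interface_max_repetitions=100,
                                    global_max_repetitions=50)))
```

Putting the interface value ahead of the global one would let a single large router such as the ASR9k be tuned without affecting other hosts.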

Please take a look at it. Thanks.

- causes
  - ZBXNEXT-8009 JSONpath optimizations (Closed)
  - ZBX-26197 Max repetition count is not replicated to SNMP host created from host prototype (Closed)

- depends on
  - ZBX-19775 [bulk processing] reccuring tooBig error-status (Confirmed)

- is duplicated by
  - ZBXNEXT-5080 allow to tune SNMP bulk mode for zabbix user - adjust MAX_SNMP_ITEMS on host interface level (Closed)

- part of
  - ZBXNEXT-7786 Add possibility to set *context* EngineID to snmp interface (Open)

| # | Sub-task | Status | Assignee |
|---|---|---|---|
| 1 | Update template windows_snmp | Closed | Kristaps Naglis |
| 2 | Update template linux_snmp | Closed | Kristaps Naglis |
| 3 | Update template cisco_snmp | Closed | Kristaps Naglis |
| 4 | Update template mikrotik_snmp | Closed | Kristaps Naglis |
| 5 | Update template tplink_snmp | Closed | Kristaps Naglis |