|

Here is some workaround (SQL wrappers for support "old-style" queries from Zabbix 5.2.3). zabbix_tsdb2_workaround.txt

I'm totally not sure if this correct and solves all possible problems, but there is no more query errors at server start and housekeeping.

|

|

bios, thanks, interesting workaround. It lacks a couple of wrappers though - remove_compress_chunk_policy and timescaledb_information.compressed_hypertable_stats. Meanwhile, we are in the process of figuring out the proper solution.

|

|

It is important to update the supported versions of TimescaleDB in the documentation:

https://www.zabbix.com/documentation/current/manual/installation/requirements

|

|

Alex, one thing to note: timescale will only be releasing v2 for newer versions of pgsql going forward, and PgSQL 13 is supposed to fix the memory issues associated with a large number of partitions within a table (eg, timescale on a massive trends table). Timescaledb is to support PgSQL13 "any day now", and at that point, this issue will become a blocker for me to move forward (and finally eliminate the massive performance issues we suffer when we have to update, rather than insert trends).

This issue as I describe was "fixed" in ZBX-16347 (but not really, zabbix just implemented a workaround).

|

|

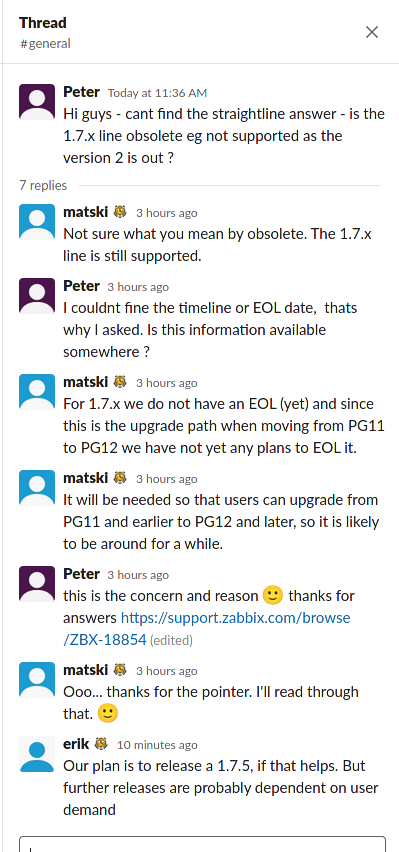

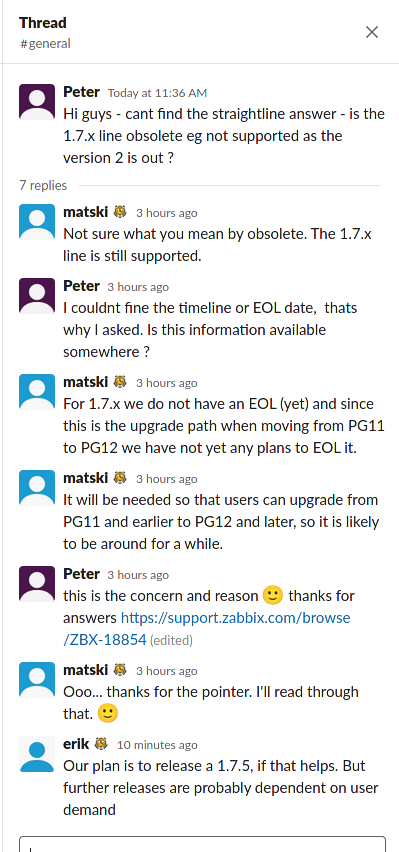

I asked the question on timescale slack - link to the thread bellow

https://timescaledb.slack.com/archives/C4GT3N90X/p1612175768193500

|

|

Hello Aaron,

...and PgSQL 13 is supposed to fix the memory issues associated with a large number of partitions within a table (eg, timescale on a massive trends table).

Are you referencing to the #15847 bug (PostgreSQL bug tracker, related TSDB issue)? This issue should be fixed starting from PgSQL 12 version.

Regards,

Edgar

|

|

Edgar, no. I was referencing the following in the release notes

- Reduce memory usage for query strings and extension scripts that contain many SQL statements (Amit Langote)

as noted in the PgSQL 13 release notes. I can't seem to find the reference, but I recall it being a correction to queries that contained multiple statements the form "UPDATE X; UPDATE Y; UPDATE Z; ...." (as Zabbix does when updating trend data that already exists in the database, as can happen when restarting the zabbix service)

It may be related to https://commitfest.postgresql.org/28/2590/

|

|

Thank you, looks like in additional to 2.0 support should be added information about possible memory issues in certain conditions (PgSQL+TSDB version) and how to avoid them.

|

|

Edgar, I don't want to distract from the core thing here; supporting TS2 is probably the most important thing to me. Zabbix now limits the number of TS shards as a workaround to the "many partitions eats your memory" issue (see resolution of ZBX-16347), but once you have TS2 support, it may be worth revisiting.

|

|

Hello,

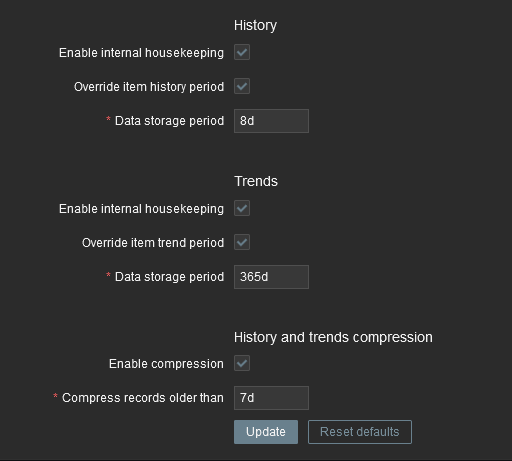

I have a question to this issue. I Migrated my Zabbix environment to ubuntu 20.04 and zabbix 5.0.8 from pg 9.6 to pg12 including timescale 2. To improve Storage usage with compression and fast housekeeping. I suffered from this issue because it is not supported.

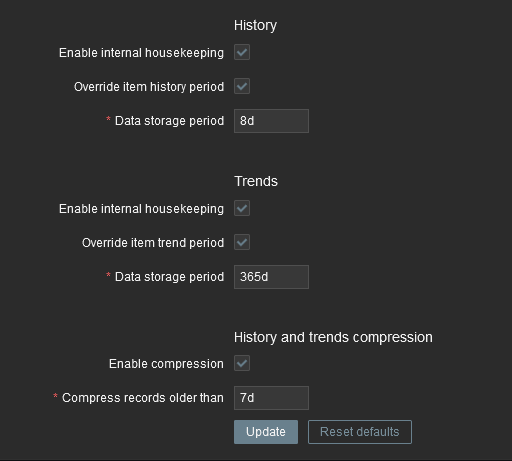

I used the SQL Script from Mikhail from the first comment zabbix_tsdb2_workaround.txt to get rid of the errors on startup to add the housekeeping to the hypertables. There is a typo in this file but it was fine, no errors since then. My History stoage period is 8d but in the logfiles there is even on the 10th day no deleted items to get rid of the errors on startup to add the housekeeping to the hypertables. There is a typo in this file but it was fine, no errors since then. My History stoage period is 8d but in the logfiles there is even on the 10th day no deleted items

1269:20210211:122450.139 executing housekeeper

1269:20210211:122450.831 housekeeper [deleted 0 hist/trends, 0 items/triggers, 4276 events, 108 problems, 2 sessions, 6 alarms, 8 audit, 0 records in 0.678363 sec, idle for 24 hour(s)]

Does this timescale Hypertable housekeeping work? can i update to 5.0.9 if it is released even i have executed the workaround sql file?

|

|

Hi @Eric, it definitely works for me - I'm with Pg12, Timescale 2 with the workaround and I see the DB size going up every day and dropping it in the morning. The housekeeper log says "deleted 0 hist/trends", same as yours.

|

|

@Eric - What was the typo? Has it been reported and fixed in the file? Also, could someone please post instructions on how to apply the patch to the DB? Would be helpful for those who don't know how to use it. Also, is it safe to use this and expect the real patch to still work when it comes out?

My DB is growing like mad right now so I am pretty sure pruning is not happening so the sooner I can get the patch working the better. I am sure the answers to the above questions will help out a lot of folks.

Thanks

|

|

@Michael Spurlock It's plain SQL, you can cat it trough psql just like when creating zabbix db initially -

cat zabbix_tsdb2_workaround.txt | sudo -u zabbix psql zabbix

Or use whatever other means you prefer to run SQL code against your zabbix db, like pgadmin.

The code creates a number of wrapper SQL functions (and some other objects) that accept the Timescale 1.7 calls that Zabbix does and translate them to the proper calls for Timescale 2.

I'd expect once the issue is resolved, Zabbix would detect the version of Timescale and issue the proper calls itself, then these will simply remain ignored - so they should be safe after the update, but unless this is confirmed by somebody on the Zabbix team, I'm not going to rely on this assumption and I will delete the wrappers from my db before I update Zabbix.

|

|

@Velko - Thanks

Any idea what the typo is? It does not run for me:

zabbix@host:~$ cat /tmp/zabbix_tsdb2_workaround.txt | psql zabbix

ERROR: permission denied for schema _timescaledb_catalog

ERROR: syntax error at or near "TO"

LINE 1: ...ER TYPE _timescaledb_catalog.ts_interval OWNER TO TO zabbix;

^

ERROR: type "_timescaledb_catalog.ts_interval" does not exist

LINE 3: (FALSE,NULL,config->>'compress_after')::_timescaledb_catalo...

^

ERROR: relation "_timescaledb_config.bgw_policy_compress_chunks" does not exist

CREATE FUNCTION

ALTER FUNCTION

CREATE FUNCTION

ALTER FUNCTION

|

|

Typo was pretty easy to find

2nd Statement in the 3rd line. There are two "TO" and one of them ist too much.

ALTER TYPE _timescaledb_catalog.ts_interval OWNER TO TO zabbix;

-->

ALTER TYPE _timescaledb_catalog.ts_interval OWNER TO zabbix;

|

|

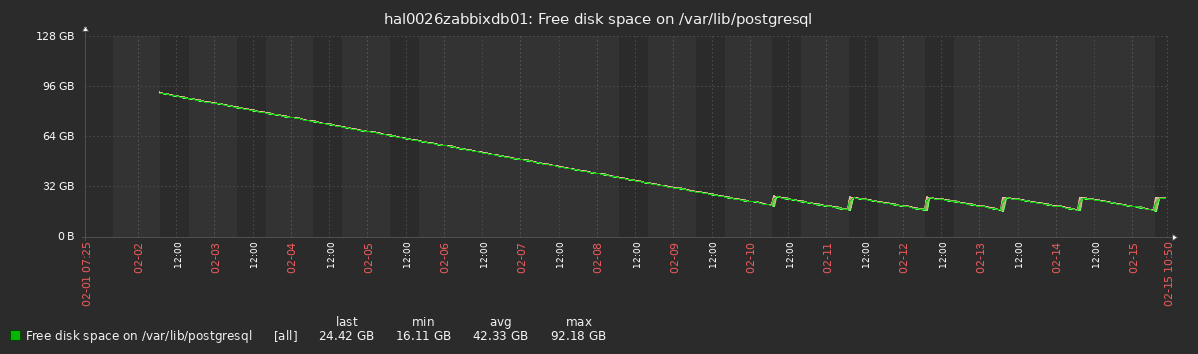

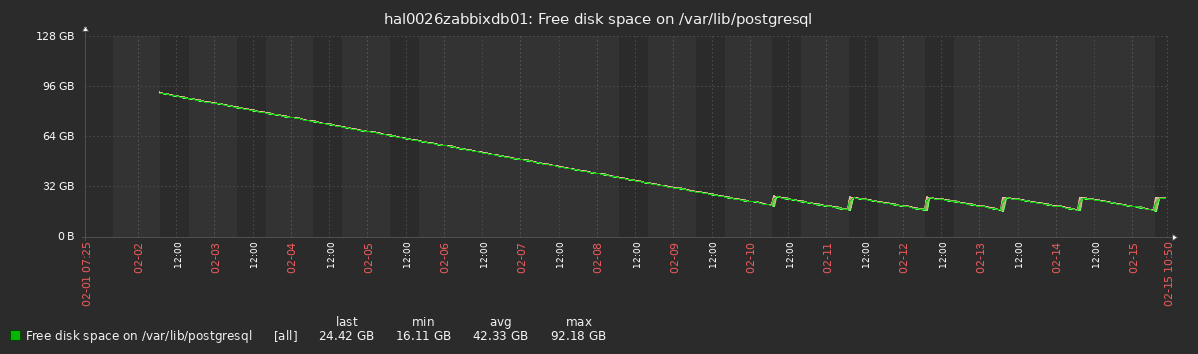

to add something new:

Compressing seems to be working fine

2021-02-15 01:01:04.952 CET [1754373] WARNING: telemetry could not connect to "telemetry.timescale.com"

2021-02-15 01:01:04.952 CET [1754373] DETAIL: Operation now in progress

2021-02-15 08:10:23.977 CET [1854855] LOG: completed compressing chunk _timescaledb_internal._hyper_3_62_chunk

2021-02-15 08:10:36.490 CET [1854914] LOG: completed compressing chunk _timescaledb_internal._hyper_5_59_chunk

2021-02-15 08:10:52.431 CET [1855015] LOG: completed compressing chunk _timescaledb_internal._hyper_4_61_chunk

2021-02-15 08:11:58.017 CET [1855323] LOG: completed compressing chunk _timescaledb_internal._hyper_1_58_chunk

2021-02-15 08:20:39.739 CET [1857917] LOG: completed compressing chunk _timescaledb_internal._hyper_2_60_chunk

from prostgresql.log

/var/lib/postgresql graph

But i cannot say that this is deleting or whether compressing the history table.

Hope that insight helps fixing this issue

|

|

@Michael Spurlock

That's a permission issue, try it with the postgres user

@Eric

It works, my graph looks the same - every day the oldest chunks are dropped, which frees some GB, then it grows the same amount back in the next 24h.

Check your chunks with `SELECT show_chunks('history')` - you will see the oldest ones disappear and new ones get created every day.

|

|

@Velko Ivanov - thanks, that worked. I am all set now. Fingers crossed my DB starts to shrink now...

|

|

Fixed in:

Updated documentation:

|

|

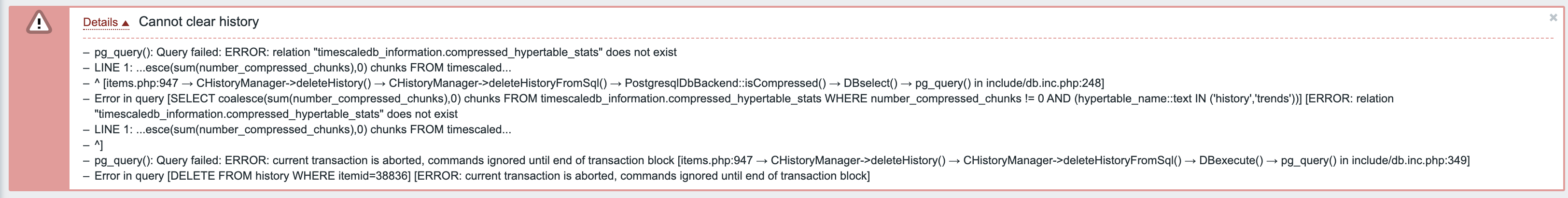

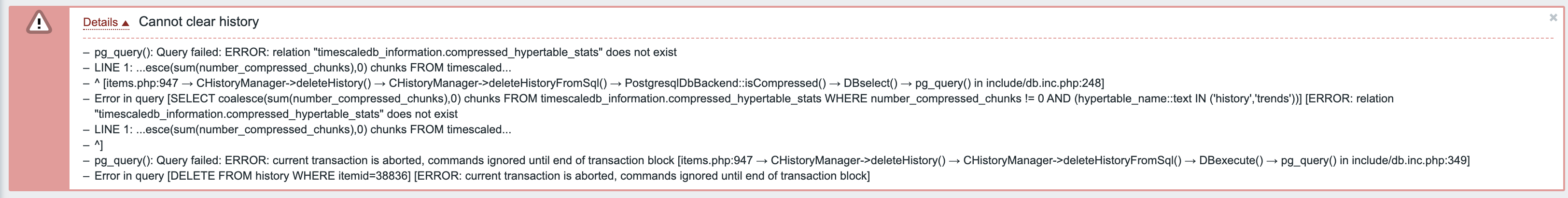

Not sure if related, but now, when I try to clear history in 5.2.5 for a single item, I'm getting following error message:

|

|

giomac It is related and it gets fixed in the latest pre-5.2.6rc1 branch.

|

|

While 5.0.10 is not available yet, is there a SQL command to manually purge Timescaledb from older history?

I tried re-enabling the conventional housekeeping, but it consumes too many CPU and IO resources.

|

|

Hello. I would be interested in a workaround as well. Is the existing attachment zabbix_tsdb2_workaround.txt the official workaround to this issue? Can the workaround cause problems with the update to version 5.0.10? Is there a time estimate when version 5.0.10 will be officially available? the official workaround to this issue? Can the workaround cause problems with the update to version 5.0.10? Is there a time estimate when version 5.0.10 will be officially available?

|

|

zabbix_tsdb2_workaround.txt modifies the database schema, therefore I don't feel like using it. I'd be quite happy with an external SQL query I could run from cron until 5.0.10 is available. I understand that the script should be quite simple revolving around `SELECT drop_chunks()` but I'd better get some first hand knowledge what tables to purge and what the time arguments to drop_chunks() should be there.

|

|

rene.lange, vas_mpeks, zabbix_tsdb2_workaround.txt is no official patch and is incomplete.

Until 5.0.10 is available you can use add_retenction_policy/remove_retention_policy TSDB functions. So the steps would be like:

- Disable housekeeping for history and trends in Zabbix

- Add integer_now function to your schema if it's not added (housekeeper adds it, but only if you use compression):

create or replace function zbx_ts_unix_now() returns integer language sql stable as $$ select extract(epoch from now())::integer $$

- For every history and trends table (that is: history,history_uint,history_text,history_log,history_str,trends,trends_uint) call it like this:

SELECT set_integer_now_func('history', 'zbx_ts_unix_now');

- For every history and trends table set retention policy to the desired period in seconds, e.g.:

SELECT add_retention_policy(relation=>'history',drop_after=>604800);

Where drop_after is a period in seconds. E.g. 604800 == 60 * 60 * 24 * 7 is drop records older than 7 days old.

- NB! Before upgrading to a version with TSDB 2.0 support do not forget to drop the retention policy by calling remove_retention_policy

This is unofficial advice and I didn't test the steps above myself. You should test it in your test environment and see if it works for you before running these steps in production.

|

|

do you know when the update will be released?

I have a very large data gain and can't do anything and i'm out of disk space. How can I fix this before the upgrade comes out ?

|

|

The next release is scheduled for Monday, March 29nd. Release candidates will be available a week earlier.

|

|

Good morning. After the upgrade zabbix timescaledb will need more space for some extra operations or the upgrade will automatically compress without worrying about everything?

|

|

Wszolek, this change is not related to TSDB compression settings. By default, Zabbix turns TSDB compression on for new installations. It does not change compression settings during upgrades.

|

|

We're running Zabbix 5.0 on FreeBSD 12.2 and since the timescaledb version in ports was upgraded to 2.0, we've used the workaround in zabbix_tsdb2_workaround.txt with good results. with good results.

Now we've upgraded to Zabbix 5.0.10, with timescaledb 2 support and we're getting segmentation faults in Postgres. We're running postgresql 11. I tried dropping everything from the workaround, which did not work.

The backtrace from the core dump is not helpful:

# lldb -c /var/db/postgres/data11/postgres.core -- postgres

(lldb) target create "postgres" --core "/var/db/postgres/data11/postgres.core"

Core file '/var/db/postgres/data11/postgres.core' (x86_64) was loaded.

(lldb) thread backtrace all

* thread #1, name = 'postgres', stop reason = signal SIGSEGV

* frame #0: 0x000000090b4f375b timescaledb-tsl-2.2.0.so`___lldb_unnamed_symbol538$$timescaledb-tsl-2.2.0.so + 267

frame #1: 0x000000090b4f335e timescaledb-tsl-2.2.0.so`___lldb_unnamed_symbol537$$timescaledb-tsl-2.2.0.so + 334

frame #2: 0x000000090b45d70b timescaledb-2.2.0.so`___lldb_unnamed_symbol378$$timescaledb-2.2.0.so + 187

frame #3: 0x00000000006d1883 postgres`___lldb_unnamed_symbol895$$postgres + 8419

frame #4: 0x00000000006ce882 postgres`subquery_planner + 3154

frame #5: 0x00000000006cd834 postgres`standard_planner + 308

frame #6: 0x000000090b45ce13 timescaledb-2.2.0.so`___lldb_unnamed_symbol375$$timescaledb-2.2.0.so + 195

frame #7: 0x0000000000781a3c postgres`pg_plan_query + 76

frame #8: 0x00000000007853af postgres`___lldb_unnamed_symbol1422$$postgres + 1087

frame #9: 0x00000000007835f0 postgres`PostgresMain + 3312

frame #10: 0x000000000070d108 postgres`___lldb_unnamed_symbol1101$$postgres + 408

frame #11: 0x000000000070c7b5 postgres`___lldb_unnamed_symbol1098$$postgres + 2917

frame #12: 0x0000000000709e8d postgres`PostmasterMain + 3789

frame #13: 0x00000000006844c8 postgres`main + 952

frame #14: 0x00000000004b4a70 postgres`_start + 256

I've tried running timescaledb.sql again and running ALTER EXTENSION timescaledb UPDATE but nothing works.

Any ideas?

|

|

einsibjani, from this backtrace it seems like you've hit a TSDB bug, please report it to Timescale.

|

Generated at Mon Jun 08 04:06:10 EEST 2026 using Jira 10.3.18#10030018-sha1:5642e4ad348b6c2a83ebdba689d04763a2393cab.